- Holo2-4B: fully open under Apache 2.0

- Holo2-8B: fully open under Apache 2.0

- Holo2-30B-A3B: research-only license (non-commercial). For commercial use, please contact us.

- Developed by: H Company

- Model type: Vision-Language Model for Navigation and Computer Use Agents

- Fine-tuned from model: Qwen/Qwen3-VL-4B-Thinking

- Blog Post: https://www.hcompany.ai/holo2

- License: Apache 2.0

Get Started with the Model

Please have a look at the cookbook in our repo, where we provide examples for both self-hosting and API use.

Training Strategy

Our models are trained on high-quality proprietary data for UI understanding and action prediction, following a multi-stage training pipeline. The training dataset is a carefully curated mix of open-source datasets, large-scale synthetic data, and human-annotated samples. Training proceeds in two stages: large-scale supervised fine-tuning, followed by online reinforcement learning (GRPO), yielding state-of-the-art performance in interpreting UIs and performing actions on large, complex screens.

Results

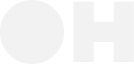

Holo2: Navigation Performance

Navigation evaluates an agent’s ability to complete real or simulated tasks through multi-step reasoning and action. Holo2 models show significant improvements in navigation efficiency and task completion rates, particularly in unseen and complex environments. Benchmarks include WebVoyager, WebArena, OSWorld, and AndroidWorld, testing the models’ abilities across web, operating system, and mobile platforms.

| Model | WebVoyager | WebArena | OSWorld | AndroidWorld | Average |

|---|---|---|---|---|---|

| Holo2-30B-A3B | 83.0% | 46.3% | 37.4% | 71.6% | 59.6% |

| Holo2-8B | 80.2% | 42.2% | 39.9% | 60.4% | 55.7% |

| Holo2-4B | 80.2% | 41.0% | 37.7% | 64.6% | 55.9% |

| Holo1.5-7B | 65.9% | 23.4% | 6.4% | 32.7% | 32.1% |

| Holo1.5-3B | 56.1% | 15.4% | 5.8% | 27.5% | 26.2% |

| Qwen3-VL-30B-A3B-Thinking | 76.1% | 45.0% | 36.6% | 62.9% | 55.1% |

| Qwen3-VL-8B-Thinking | 72.0% | 31.9% | 28.8% | 52.6% | 46.3% |

| Qwen3-VL-4B-Thinking | 67.5% | 31.5% | 24.1% | 45.7% | 42.2% |
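
The Average column appears to be the unweighted mean of the four benchmark scores, which is consistent with the rows shown. A minimal sketch that recomputes it for the Holo2 rows, with scores copied from the table and `macro_average` as a hypothetical helper name:

```python
from statistics import mean

# Per-benchmark scores (%) copied from the navigation table:
# WebVoyager, WebArena, OSWorld, AndroidWorld.
navigation_scores = {
    "Holo2-30B-A3B": [83.0, 46.3, 37.4, 71.6],
    "Holo2-8B": [80.2, 42.2, 39.9, 60.4],
    "Holo2-4B": [80.2, 41.0, 37.7, 64.6],
}


def macro_average(scores):
    """Unweighted mean across benchmarks (assumed definition of 'Average')."""
    return mean(scores)


for model, scores in navigation_scores.items():
    print(f"{model}: {macro_average(scores):.1f}%")
```

Because each benchmark is weighted equally, a large gain on one benchmark (for example AndroidWorld) moves the average as much as the same gain on any other.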

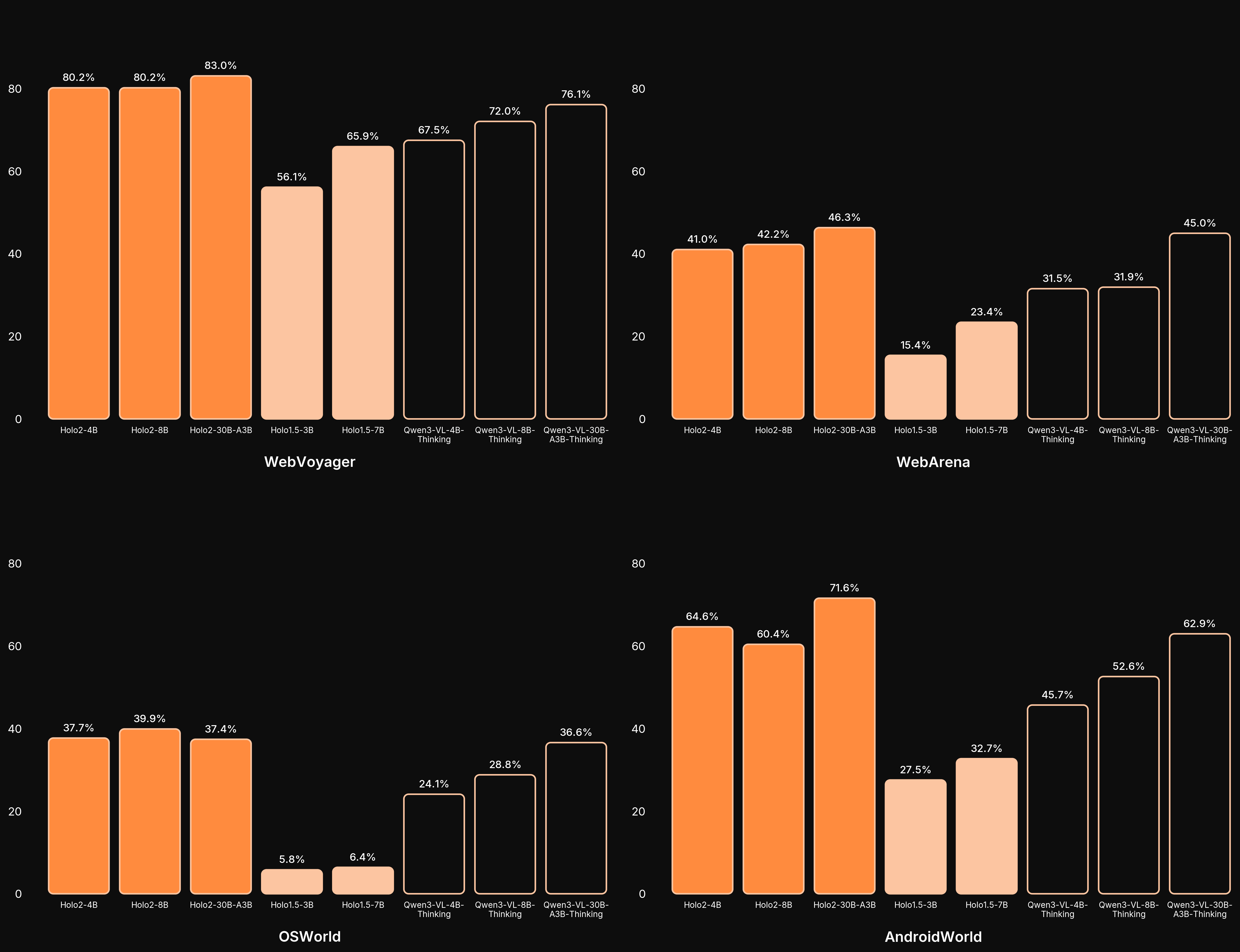

Holo2: SOTA UI Localization

UI Localization measures how precisely an agent can locate on-screen elements (buttons, inputs, links) necessary for accurate interaction. Holo2 continues to set new standards for localization accuracy across web, OS, and mobile benchmarks.

| Model | ScreenSpot-Pro | OSWorld-G | Showdown | Ground-UI-1K | WebClick-v1 | ScreenSpot-v2 | Average |

|---|---|---|---|---|---|---|---|

| Holo2-30B-A3B | 66.1% | 76.1% | 77.6% | 85.4% | 91.3% | 94.9% | 81.90 |

| Holo2-8B | 58.9% | 70.1% | 72.5% | 83.8% | 89.5% | 93.2% | 78.00 |

| Holo2-4B | 57.2% | 69.4% | 74.7% | 83.3% | 88.8% | 93.2% | 77.77 |

| Holo1.5-72B | 63.3% | 71.8% | 76.8% | 84.5% | 92.4% | 94.4% | 80.52 |

| Holo1.5-7B | 57.9% | 66.2% | 72.1% | 84.0% | 90.2% | 93.3% | 77.28 |

| Holo1.5-3B | 51.4% | 61.5% | 67.5% | 83.2% | 81.4% | 91.6% | 72.77 |

| Qwen3-VL-30B-A3B-Thinking | 49.9% | 65.8% | 71.2% | 84.2% | 89.5% | 91.8% | 75.40 |

| Qwen3-VL-8B-Thinking | 38.5% | 56.0% | 64.2% | 83.6% | 85.9% | 91.5% | 69.95 |

| Qwen3-VL-4B-Thinking | 41.4% | 56.4% | 66.6% | 84.1% | 85.8% | 90.0% | 70.72 |

| Qwen2.5-VL-72B | 55.6% | 62.0% | 41.0% | 85.4% | 88.3% | 93.3% | 70.93 |

| Qwen2.5-VL-7B | 29.0% | 40.6% | 52.0% | 80.7% | 76.5% | 85.6% | 60.73 |

| Qwen2.5-VL-3B | 29.3% | 34.3% | 50.3% | 76.4% | 71.2% | 80.7% | 57.03 |

| UI-TARS-1.5-7B | 39.0% | 61.0% | 58.0% | 84.0% | 86.1% | 94.0% | 70.35 |

| UI-Venus-72B | 61.9% | 70.4% | 75.6% | 75.5% | 77.0% | 95.3% | 75.95 |

| UI-Venus-7B | 50.8% | 58.8% | 67.3% | 82.3% | 84.4% | 94.1% | 72.95 |
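
Localization benchmarks of this kind are commonly scored by checking whether the predicted click point falls inside the target element's bounding box. The sketch below illustrates that scoring rule only; it is not the evaluation code used for the table above, and the `Box` and `click_accuracy` names, the pixel coordinate convention, and the sample data are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned bounding box in pixel coordinates (hypothetical type)."""
    left: float
    top: float
    right: float
    bottom: float

    def contains(self, x, y):
        return self.left <= x <= self.right and self.top <= y <= self.bottom


def click_accuracy(predictions, targets):
    """Fraction of predicted (x, y) clicks that land inside their target box."""
    if len(predictions) != len(targets):
        raise ValueError("predictions and targets must have the same length")
    if not targets:
        return 0.0
    hits = sum(box.contains(x, y) for (x, y), box in zip(predictions, targets))
    return hits / len(targets)


# Made-up sample: the first and third clicks hit their targets, the second misses.
predictions = [(50, 20), (300, 400), (10, 10)]
targets = [Box(40, 10, 120, 30), Box(0, 0, 100, 100), Box(0, 0, 20, 20)]
accuracy = click_accuracy(predictions, targets)
print(f"{accuracy:.1%}")
```

A point-in-box rule rewards any click that would activate the element, so small offsets from the element's center do not count against the model.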
